Like many industries, the retail sector is riding the wave of hype surrounding Gen AI, with eCommerce brands trialing it to replace existing production processes. Yet we observe an internal debate between the value the technology brings (reduced costs and faster time to market, to name a few) and the compromise brands feel they have to make on quality. This often leads to long and difficult decision-making processes, which ultimately delay the expected benefits of AI-powered production.

This is, however, a false binary. Brands don't have to choose between AI production and studios.

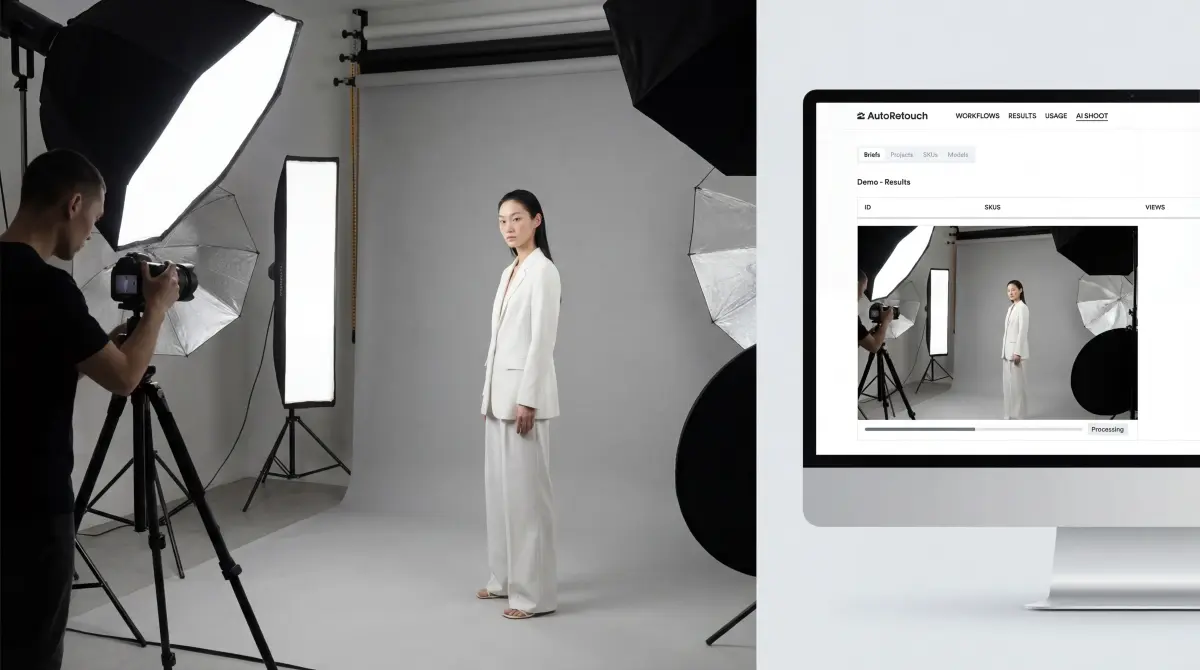

They can and should do both, through a single pipeline. This is what healthy AI adoption looks like.

In 2026, the question isn't "should we use AI for visual production?" but rather "which products should we shoot in studios, and which ones should we produce with AI?"

AI-powered production is actually a new way to fight an old problem: scaling shooting throughput without costs shooting up.

The everlasting scale problem studios alone can't solve

Mid-size fashion retailers drop 5-10k new SKUs per season. Each SKU needs multiple visuals (on-model, packshot, detail, lifestyle), delivered across multiple channels with different specs. That's tens of thousands of assets per drop, before even counting video, 3D assets or product descriptions.

Traditional production was designed for a world with fewer SKUs and fewer channels, a world that could run on Excel. When a brand needs to scale its visual production, there are never enough studio days, never enough models, and there are physical constraints at every step of the process.

The challenge is scaling, not quality. Studios produce beautiful work and remain the #1 way to produce high-quality, bespoke content to this day. The problem is one of approach, throughput and economics, which is why AI entered the conversation in the first place: producing at scale is a major challenge and cost center for brands.

What AI does well today

There is a detail the industry tends to overlook: AI outputs are predictions, and predictions can be wrong. Still, AI works a lot of the time, and it is a powerful lever for delivering high-volume, consistent production through a scalable process. AI-powered fashion visual production is now notably strong at:

- Product image to on-model visuals: This is the core Gen AI use case for fashion eCommerce. Producing on-model images from existing flatlays, mannequin shots or ghost mannequins is now a solved problem and the best way to unlock the expected benefits of AI.

- Automated post-production: Background removal, shadow generation, cropping to channel specs, color harmonization, skin retouching. Tasks that used to take a retoucher minutes per image now process in seconds across hundreds of images in parallel. This has been the expertise of AutoRetouch since launch.

- Video production from images: Turning static product images into short-form, PDP-ready video lets brands introduce video to new categories, or to references that wouldn't have had videos before. It's a game changer for production: videos are the most expensive and challenging assets to produce, yet they are the assets that make a real difference to PDP performance.

The real value of AI isn't in its ability to do better than traditional production. It might at some point, but that point hasn't been reached. What matters is its ability to be part of a system, part of a pipeline that never sleeps. Take a single studio image and its quality will beat that of an AI image; probably not in the eyes of most consumers, but in the eyes of those who have taste and decide how brands are embodied online. The value of AI here isn't about beating real images on quality. It's about repeating the best quality possible at scale, for a fraction of the cost, unlocking new capabilities and allowing brands to shift significant production flows to it.

What AI still can't replace

AI is a game changer for producing on-model images at scale, but not for every use case. We still observe major blockers for some applications of Gen AI in visual production, namely:

- Hero and campaign imagery: When a brand launches a new season, the creative direction, styling, set design, and model casting for hero shots still demand, in most cases, a physical shoot and the expertise of photographers, art directors and professional models. AI can replicate, but it can't originate a creative concept.

- Fabric and fit nuance at the high end: For luxury, lingerie and haute couture brands, the way silk drapes, the way lace is rendered, or the way a structured blazer sits on a shoulder carries real commercial weight. AI is improving here, but when return rates and brand image are key and the tolerance for inaccuracy is near zero, AI isn't the right production method.

- High-definition garment and texture accuracy: When extreme detail views are needed and depicting reality is key, Gen AI struggles to deliver. By definition, it generates new pixels, and those pixels may alter details with varying degrees of intensity and consequence (an incorrect logo isn't the same as a slightly off seam).

- New product categories or first-ever shots: AI models are trained on existing visual patterns. When a brand introduces a genuinely new silhouette or product type, AI will often struggle to reproduce the garment as intended. A best-selling running shoe in a new colorway? No problem. A co-branded capsule with a custom logo and embroidered detailing? That's where AI will struggle.

AI isn't failing to deliver on its promise, but it can't handle the entire spectrum of product catalogues, for every product category. When the stakes and expectations are too high, studios remain the right tool. That is as true for Gen AI on-model image production as it is for AI editing versus the expert, high-definition post-production that luxury brands demand. Some workloads simply aren't a good match for AI.

Acknowledging limitations doesn't invalidate the core value and capabilities of Gen AI for visual production. It does, however, raise the following question: how can brands leverage AI if it's not possible to produce images for an entire catalog? The answer fits in two words: hybrid production.

The hybrid model: how it actually works

Brands running a hybrid model for their PDP content production don't make decisions manually, image by image. Instead, they rely on systems that route each product to the appropriate production channel, based on catalog-level rules.

Depending on the brand's internal acceptance of the technology (the internal debate), its product categories and its general requirements, this routing can be designed as follows:

- Tier 1: hero products (top 5-10% of catalog) remain fully shot in studios. Stakes are high; small details, creativity and nuance matter too much; and revenues justify the investment.

- Tier 2: product categories with challenging fabrics or complex details remain mostly shot in studios. This isn't driven by economics, but by current tech capabilities.

- Tier 3: back catalog, B2B content, RTW basics, SKUs targeting secondary markets. These can be mostly produced with AI, as the value of having a good enough image vastly outweighs the time and investment required to get a perfect one, or worse, having none.
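
The tiers above can be sketched as a small set of catalog-level rules. This is a minimal illustration, not a description of any specific orchestration platform: the `Sku` fields, the 10% hero cutoff and the category names are all assumptions, and the sketch simplifies "mostly" into a single default channel per tier.

```python
from dataclasses import dataclass
from enum import Enum


class Channel(Enum):
    STUDIO = "studio"
    AI = "ai"


@dataclass(frozen=True)
class Sku:
    sku_id: str
    category: str
    # Hypothetical field: 0.0 = best seller, 1.0 = lowest seller.
    revenue_rank_pct: float


# Hypothetical catalog-level rules; real thresholds and categories are brand-specific.
HERO_CUTOFF_PCT = 0.10                                  # Tier 1: top 10% of catalog
STUDIO_CATEGORIES = {"lingerie", "silk", "tailoring"}   # Tier 2: hard fabrics, complex details


def route(sku: Sku) -> tuple[int, Channel]:
    """Return the (tier, default production channel) for a SKU."""
    if sku.revenue_rank_pct < HERO_CUTOFF_PCT:
        return 1, Channel.STUDIO
    if sku.category in STUDIO_CATEGORIES:
        return 2, Channel.STUDIO
    return 3, Channel.AI


catalog = [
    Sku("SKU-001", "outerwear", 0.02),
    Sku("SKU-002", "lingerie", 0.40),
    Sku("SKU-003", "basics", 0.75),
]
for sku in catalog:
    tier, channel = route(sku)
    print(sku.sku_id, tier, channel.value)
```

In a sketch like this, moving a category from one channel to the other means editing `STUDIO_CATEGORIES` or the cutoff, not reviewing images one by one.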

But the real value of hybrid production goes beyond cost allocation. It adds elasticity to the production process and gives brands an additional way to manage production seasonality. Shifting production to AI is much simpler than shifting excess production volume to a studio (new or existing). Scaling to handle peak seasons, or to mitigate production bottlenecks, becomes an easy operational decision instead of a new vendor to onboard: the only real action required is a routing rule change in the production orchestration platform.

But that's not all. With production channels routed from a single system, it becomes trivial to apply identical post-production processes to both AI and studio production. This adds another layer of content consistency, streamlines operations even further, and supports what we believe is key for PDP-readiness.

Quality gates, the only way to return PDP-ready visuals

The hybrid production process is the way to introduce or scale AI fashion model production for fashion eCommerce brands. But the fact that part of that visual production is made with AI doesn't mean checks stop mattering.

Too often, AI is expected to produce perfect results. But shooting with AI is much like shooting in real life: multiple shots are required to get a good one (that's one check), shots cannot be published as is without quality checks (that's two), and they need a brand-grade validation step (that's three). Having the right workflows in place to assess visual quality, and to select and retouch images that aren't fit for publication, is instrumental to the success of any AI strategy.

Fit fidelity, color accuracy, skin consistency, background artifacts: Gen AI can produce great results, but it requires oversight for those results to be truly PDP- or marketplace-ready. Every image, whether it came from a studio or from an AI pipeline, needs to go through quality gates before it's published. This is the step that turns AI-generated imagery from "experimental" to "production-grade", and it is how production-grade AI should be delivered.
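
As a rough illustration of what a quality gate can look like in practice, the sketch below runs the same checks on every image regardless of its source and separates images ready for brand validation from those that need a retouch. The gate names, the 0-1 scores and the 0.9 thresholds are assumptions made for the example; how those scores are produced (automated checks, human review, or both) is out of scope here.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Image:
    image_id: str
    source: str  # "studio" or "ai"; the gates below treat both the same way
    # Hypothetical 0-1 scores from upstream checks, keyed by gate name.
    scores: dict[str, float] = field(default_factory=dict)


def min_score(name: str, threshold: float) -> Callable[[Image], bool]:
    """Build a gate that passes only if the named score meets the threshold.

    A missing score counts as 0.0, so unchecked images never pass by default.
    """
    return lambda img: img.scores.get(name, 0.0) >= threshold


# Illustrative gates and thresholds; real ones would be brand-specific.
GATES: list[tuple[str, Callable[[Image], bool]]] = [
    ("fit_fidelity", min_score("fit_fidelity", 0.9)),
    ("color_accuracy", min_score("color_accuracy", 0.9)),
    ("skin_consistency", min_score("skin_consistency", 0.9)),
    ("background_clean", min_score("background_clean", 0.9)),
]


def failed_gates(img: Image) -> list[str]:
    """Return the names of the gates this image does not pass."""
    return [name for name, check in GATES if not check(img)]


def triage(images: list[Image]) -> tuple[list[str], dict[str, list[str]]]:
    """Split images into (ready for brand validation, needs retouch with reasons)."""
    ready: list[str] = []
    retouch: dict[str, list[str]] = {}
    for img in images:
        failures = failed_gates(img)
        if failures:
            retouch[img.image_id] = failures
        else:
            ready.append(img.image_id)
    return ready, retouch


good = {"fit_fidelity": 0.95, "color_accuracy": 0.95,
        "skin_consistency": 0.95, "background_clean": 0.95}
batch = [
    Image("img-1", "ai", good),
    Image("img-2", "studio", {**good, "color_accuracy": 0.5}),
]
ready, retouch = triage(batch)
print(ready)
print(retouch)
```

Images in `ready` would still go through the brand-grade validation step, which this sketch does not model.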

The shift to hybrid production isn't a technology decision. It's an operations decision. The technology is ready. The question is whether your production workflow is designed to use it.

If you're still debating "AI or studio," you're asking last year's question. The brands pulling ahead are asking: "Which SKUs go where, how do we connect the pipeline, and who's checking the output?"

That's the model that scales.

Book a demo to learn more.